Gemma 2, Google’s secret recipe for AI awesomeness, is the latest AI sensation, and it’s got developers and researchers buzzing with excitement. If you thought the original Gemma was cool, prepare to have your mind blown by its supercharged successor.

Gemma 2: The New Kid on the Block

Let’s cut to the chase: Gemma 2 is here, and it’s not just an incremental update. This bad boy is a full-blown AI revolution packed into a surprisingly compact package. Google’s brainchild has hit the scene in two flavors: a nimble 9-billion-parameter model for those who like their AI lean and mean, and a beefier 27-billion-parameter version for the power users out there.

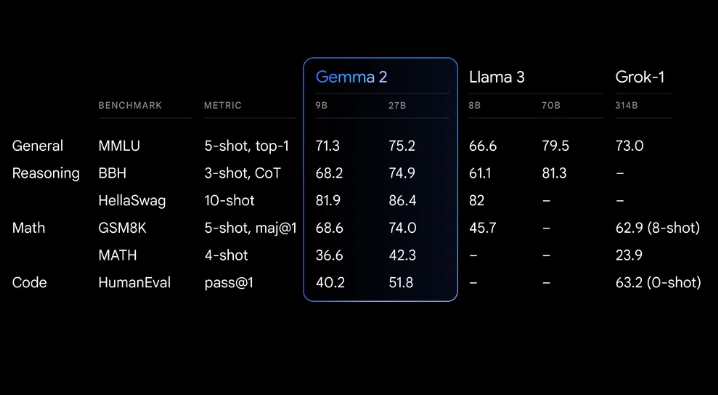

But here’s the kicker: these models aren’t just about raw numbers. They’re punching way above their weight class, and Google reports that the 27B model offers a competitive alternative to models more than twice its size. We’re talking David vs. Goliath levels of performance here, folks.

Under the Hood: What Makes Gemma 2 Tick?

Alright, let’s get our hands dirty and peek under the hood of this AI beast. Gemma 2 isn’t just a rehash of its predecessor; it’s built on a redesigned architecture. The Google boffins have been burning the midnight oil to create something that’s not just powerful, but also incredibly efficient.

Imagine having the performance of a supercar with the fuel economy of a hybrid: that’s Gemma 2 in a nutshell. It’s been engineered to deliver top-notch performance while sipping resources like a connoisseur at a wine tasting. This means you can run inference with the 27B model at full precision on a single NVIDIA H100 Tensor Core GPU or a single Google Cloud TPU host without breaking a sweat (or your bank account).
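To see why a single card is plausible, here is a back-of-the-envelope sketch of the weight memory alone. It assumes nominal counts of exactly 9 and 27 billion parameters, 2 bytes per weight in bfloat16, and the 80 GB H100 variant. The real parameter counts differ slightly, and KV cache, activations and framework overhead are ignored, so treat the numbers as rough lower bounds.

```python
# Rough, weights-only memory estimate for Gemma 2 in bfloat16.
# Assumptions (not from the article): nominal parameter counts of exactly
# 9e9 and 27e9, 2 bytes per bf16 weight, and the 80 GB H100 variant.
# KV cache, activations and framework overhead are ignored.

BYTES_PER_BF16 = 2
H100_MEMORY_GB = 80  # assumed 80 GB variant


def weight_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Return weight memory in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


for name, params in [("Gemma 2 9B", 9e9), ("Gemma 2 27B", 27e9)]:
    gb = weight_memory_gb(params, BYTES_PER_BF16)
    headroom = H100_MEMORY_GB - gb
    print(f"{name}: ~{gb:.0f} GB of bf16 weights, ~{headroom:.0f} GB left on an 80 GB H100")
```

Under these assumptions the 27B weights take about 54 GB, leaving roughly 26 GB of an 80 GB card for the KV cache and activations during inference.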

The Swiss Army Knife of AI Models

One of the coolest things about Gemma 2 is its versatility. Whether you’re a solo developer tinkering on your gaming laptop or a research team with access to cloud-based supercomputers, Gemma 2 has got you covered. It’s like the Swiss Army knife of AI models – adaptable, reliable, and always ready for action.

Want to run it at full precision? No problem, just fire up Google AI Studio. Prefer to keep things local? The quantized version running on your CPU via Gemma.cpp has got your back. And for those of you rocking NVIDIA RTX or GeForce RTX setups at home, Hugging Face Transformers is your new best friend.
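Quantization is what makes the local route practical: weights are stored with fewer bits, trading a little accuracy for a lot less memory. The sketch below shows the idea with a generic symmetric ("absmax") int8 scheme on a handful of made-up weights. It is a textbook illustration only, not the format Gemma.cpp actually uses, and the function names are hypothetical.

```python
# Toy symmetric ("absmax") int8 quantization of a small weight vector.
# Generic textbook scheme for illustration; NOT Gemma.cpp's actual format.


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest code and clamp to guard against float round-off.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize_int8(q: list[int], scale: float) -> list[float]:
    """Recover approximate floats from the int8 codes."""
    return [v * scale for v in q]


weights = [0.12, -0.53, 0.98, -0.07, 0.31]  # made-up example weights
q, scale = quantize_int8(weights)
restored = dequantize_int8(q, scale)
max_err = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 codes:", q)
print("max abs error:", max_err)
# Stored in a real int8 array, each weight would need 1 byte instead of
# 2 (bf16) or 4 (fp32), at the cost of a rounding error of at most scale / 2.
```

Production formats typically use many scales (for example, one per block of weights) rather than a single scale for the whole tensor, which keeps the error lower, but the memory trade-off works the same way in spirit.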

The Gemma 2 Ecosystem: A Developer’s Paradise

Now, let’s talk about integration. Gemma 2 isn’t some prima donna that only plays nice with specific tools. Oh no, this AI is a team player through and through. It’s compatible with all the major AI frameworks you know and love – Hugging Face Transformers, JAX, PyTorch, TensorFlow via native Keras 3.0, vLLM, Gemma.cpp, Llama.cpp, and Ollama. It’s like the popular kid at school who gets along with everyone.

But wait, there’s more! NVIDIA users, rejoice! Gemma 2 is optimized with NVIDIA TensorRT-LLM, ready to run on NVIDIA-accelerated infrastructure or as an NVIDIA NIM inference microservice. And for the NeMo fans out there, optimization is on the horizon.

Gemma 2: The Gift That Keeps on Giving

Here’s where things get really exciting. Gemma 2 isn’t just a static model; it’s a springboard for innovation. With its commercially friendly license, developers and researchers can take Gemma 2, tweak it, fine-tune it, and even commercialize their creations. It’s like getting a high-performance sports car and being told, “Go ahead, mod it however you like!”

To help you hit the ground running, there’s the new Gemma Cookbook. Think of it as your personal AI recipe book, filled with practical examples and step-by-step guides for building applications and fine-tuning Gemma 2 for specific tasks. Whether you’re into retrieval-augmented generation or have something more exotic in mind, the Cookbook has got you covered.
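Retrieval-augmented generation boils down to two steps: find the passages most relevant to a question, then hand them to the model alongside the question. The toy sketch below does both with plain word-count cosine similarity. It is a hypothetical illustration, not code from the Gemma Cookbook; real pipelines typically swap the word counts for learned embeddings and then send the resulting prompt to Gemma 2.

```python
# Toy RAG front end: score documents by word-count cosine similarity,
# keep the best matches, and paste them into a prompt for the model.
# Hypothetical illustration; no model is actually called here.

import math
import re
from collections import Counter


def tokenize(text: str) -> Counter:
    """Lowercase bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: cosine(q, tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, context: list[str]) -> str:
    """Combine retrieved passages and the question into one prompt."""
    context_block = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{context_block}\n\nQuestion: {query}"


docs = [
    "Gemma 2 comes in 9 billion and 27 billion parameter sizes.",
    "The Gemma Cookbook collects practical examples and guides.",
    "SynthID is a text watermarking technology.",
]
query = "What parameter sizes does Gemma 2 come in?"
prompt = build_prompt(query, retrieve(query, docs, k=1))
print(prompt)
```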

The Responsible AI Angle: Because With Great Power Comes Great Responsibility

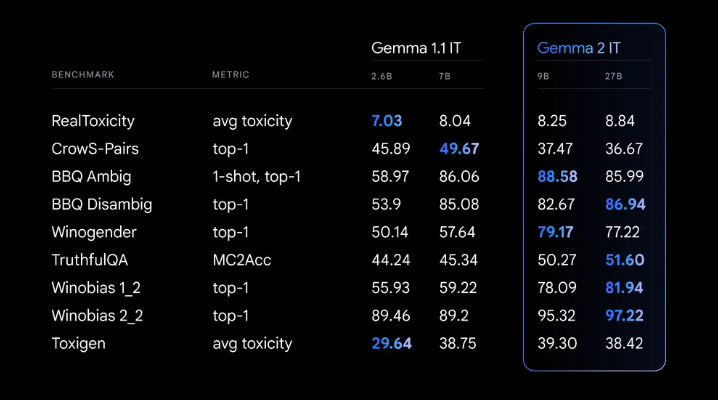

Now, let’s address the elephant in the room – AI ethics and responsibility. Google isn’t just throwing Gemma 2 out into the wild without a care. They’ve baked in significant safety advancements and followed robust internal safety processes during training. We’re talking data filtering, rigorous testing, and comprehensive evaluations to identify and mitigate potential biases and risks.

But they’re not stopping there. Google is actively working on open-sourcing their text watermarking technology, SynthID, for Gemma models. And for those of you who like to peek behind the curtain, they’re publishing results on a wide array of public benchmarks related to safety and representational harms.

Gemma 2 in Action: From Theory to Practice

Enough with the tech specs; let’s talk real-world impact. Since the launch of the original Gemma, the model family has racked up over 10 million downloads and a plethora of inspiring projects. Take Navarasa, for instance: its creators leveraged Gemma to build a model that celebrates India’s rich linguistic diversity.

With Gemma 2’s enhanced capabilities, we’re on the cusp of seeing even more groundbreaking projects. The potential applications are limited only by your imagination – from natural language processing tasks that push the boundaries of human-AI interaction, to complex problem-solving models that could revolutionize industries.

The Road Ahead: Gemma 2 and Beyond

But hold onto your hats, folks, because this is just the beginning. The Google team has already announced future developments, including an upcoming 2.6B-parameter Gemma 2 model. This little powerhouse aims to bridge the gap between lightweight accessibility and potent performance, potentially bringing advanced AI capabilities to an even wider range of devices and applications.

Getting Your Hands on Gemma 2: It’s Easier Than You Think

Now, I know what you’re thinking – “This all sounds great, but how do I actually get my hands on Gemma 2?” Well, my friends, Google has made it surprisingly easy. You can take Gemma 2 for a spin right now in Google AI Studio, testing out its full 27B parameter glory without worrying about hardware requirements.

For those who prefer to tinker locally, you can download the model weights from Kaggle and Hugging Face Models. And if you’re a Google Cloud aficionado, keep an eye out for Gemma 2 on Vertex AI Model Garden – it’s coming soon.
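Once you have the weights, the instruction-tuned checkpoints expect conversations in a specific turn format. The sketch below hand-rolls it, assuming the format shown on the Gemma model cards: `user` and `model` roles, each turn wrapped in `<start_of_turn>` and `<end_of_turn>` markers, and no system role. In practice, Hugging Face Transformers’ `tokenizer.apply_chat_template` builds this string for you; `format_gemma_chat` is a hypothetical helper for illustration, and it omits the leading `<bos>` token on the assumption that the tokenizer adds it.

```python
# Hand-rolled Gemma-style chat prompt, for illustration only.
# Assumptions: roles are "user" and "model" ("assistant" is mapped to
# "model"), each turn is wrapped as <start_of_turn>ROLE\n...<end_of_turn>\n,
# there is no system role, and the tokenizer prepends <bos> itself.


def format_gemma_chat(messages: list[dict[str, str]], add_generation_prompt: bool = True) -> str:
    parts = []
    for msg in messages:
        role = "model" if msg["role"] in ("assistant", "model") else msg["role"]
        if role not in ("user", "model"):
            raise ValueError(f"unsupported role: {msg['role']!r}")
        parts.append(f"<start_of_turn>{role}\n{msg['content'].strip()}<end_of_turn>\n")
    if add_generation_prompt:
        # Open a model turn so generation continues as the model's reply.
        parts.append("<start_of_turn>model\n")
    return "".join(parts)


prompt = format_gemma_chat([{"role": "user", "content": "Name the two Gemma 2 sizes."}])
print(prompt)
```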

But wait, there’s more! (I feel like an infomercial host, but the offers just keep coming!) Google is offering free access for research and development through Kaggle or via a free tier for Colab notebooks. And if you’re a first-time Google Cloud customer, you might be eligible for a cool $300 in credits.

Calling All Academic Researchers!

Hey, all you brilliant academic minds out there! Google hasn’t forgotten about you. They’ve set up the Gemma 2 Academic Research Program, offering Google Cloud credits to supercharge your research with Gemma 2. But don’t dawdle – applications are open now through August 9.

Wrapping Up: The Gemma 2 Revolution

Gemma 2 isn’t just an AI model; it’s a game-changer, a paradigm shifter, and quite possibly the coolest thing since sliced bread (at least in the AI world).

From its knockout performance and efficiency to its flexibility and ease of use, Gemma 2 is setting a new standard for open AI models. Whether you’re a seasoned AI researcher, a curious developer, or just someone who gets excited about cutting-edge tech, Gemma 2 has something to offer.

As we stand on the brink of this AI revolution, one thing is clear – the future of AI is open, accessible, and more exciting than ever. So, what are you waiting for? Dive in, experiment, create, and who knows? Your Gemma 2-powered project might just be the next big thing in AI.

Remember, in the world of Gemma 2, the only limit is your imagination. So dream big, code boldly, and let’s see what amazing things we can build together. The Gemma 2 era has begun – are you ready to be part of it?